— 2D platformer tutorial —

Part 1: Loading and displaying the map

Introduction

Note: this tutorial series builds upon the ones that came before it. If you aren't familiar with the previous tutorials, or the prior ones of this series, you should read those first.

This first tutorial will explain how to load and display a basic 2D map. Extract the archive, run cmake CMakeLists.txt, followed by make to build. Once compiling has finished, type ./ppp01 to run the code.

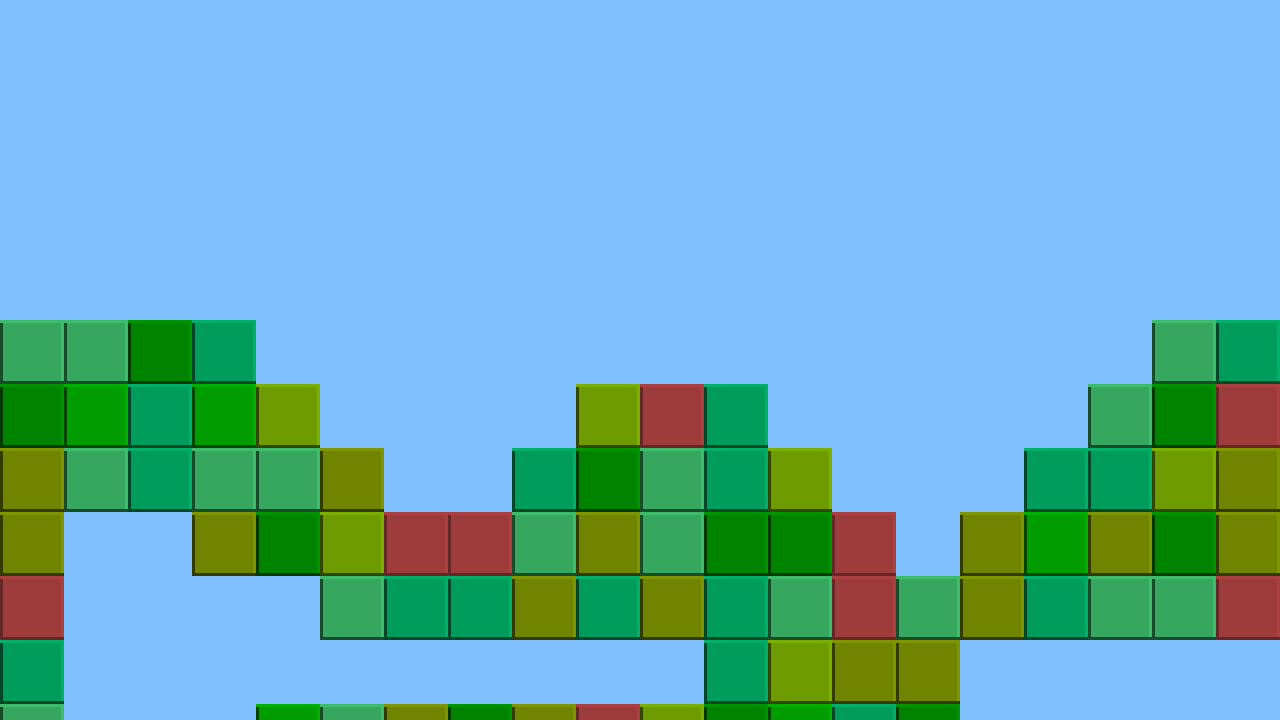

A 1280 x 720 window will open, with a series of coloured squares displayed over a light blue background. Close the window by clicking on the window's close button.

Inspecting the code

As always, we'll start by looking at the defs.h and structs.h files. First, defs.h:

    #define MAX_TILES 8
    #define TILE_SIZE 64

    #define MAP_WIDTH 40
    #define MAP_HEIGHT 20

    #define MAP_RENDER_WIDTH 20
    #define MAP_RENDER_HEIGHT 12

We're defining a number of things here. MAX_TILES defines the maximum number of tile types we'll support. TILE_SIZE specifies the width and height of each tile, in pixels. MAP_WIDTH and MAP_HEIGHT define how wide and tall our map data is, in tiles. MAP_RENDER_WIDTH and MAP_RENDER_HEIGHT define how many tiles will be drawn on screen along the x and y axes. We'll see more of these later.
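To get a feel for how these values relate to the window, the arithmetic can be sketched as below. This is only an illustration: SCREEN_WIDTH and SCREEN_HEIGHT are assumed names based on the 1280 x 720 window described earlier, and the helper functions are hypothetical, not part of the tutorial's code.

```c
#include <stdio.h>

#define TILE_SIZE         64
#define MAP_WIDTH         40
#define MAP_HEIGHT        20
#define MAP_RENDER_WIDTH  20
#define MAP_RENDER_HEIGHT 12

/* Assumed names and values, taken from the 1280 x 720 window. */
#define SCREEN_WIDTH  1280
#define SCREEN_HEIGHT 720

/* Pixels covered by the rendered part of the map along each axis. */
int renderPixelWidth(void)  { return MAP_RENDER_WIDTH * TILE_SIZE; }
int renderPixelHeight(void) { return MAP_RENDER_HEIGHT * TILE_SIZE; }

/* Pixel size of the entire map, most of which is off screen. */
int mapPixelWidth(void)  { return MAP_WIDTH * TILE_SIZE; }
int mapPixelHeight(void) { return MAP_HEIGHT * TILE_SIZE; }

/* Hypothetical helper: print the numbers for inspection. */
void printMapMetrics(void)
{
    printf("rendered area: %d x %d pixels\n", renderPixelWidth(), renderPixelHeight());
    printf("whole map:     %d x %d pixels\n", mapPixelWidth(), mapPixelHeight());
    printf("rows past the bottom edge: %d pixels\n", renderPixelHeight() - SCREEN_HEIGHT);
}
```

With these values, 20 tiles of 64 pixels fill the 1280 pixel width exactly, while 12 rows cover 768 pixels, so the last row extends 48 pixels past the bottom of a 720 pixel tall window. The full map is 2560 x 1280 pixels, which is why only part of it can be shown at once.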

Looking at structs.h, we'll consider only the Stage struct for now:

    typedef struct {
        int map[MAP_WIDTH][MAP_HEIGHT];
    } Stage;

This struct holds our map data in a two-dimensional array called map, indexed as map[x][y]. The width and height of this data are MAP_WIDTH and MAP_HEIGHT.
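Because the array is indexed as map[x][y], it is easy to swap the two indices by accident. The sketch below shows one way to guard access to the array. The helpers isInsideMap, getTile and clearStage are hypothetical and do not appear in the tutorial's source.

```c
#include <string.h>

#define MAP_WIDTH  40
#define MAP_HEIGHT 20

typedef struct {
    int map[MAP_WIDTH][MAP_HEIGHT];
} Stage;

/* Hypothetical helper: returns 1 if (x, y) lies inside the map. */
int isInsideMap(int x, int y)
{
    return x >= 0 && y >= 0 && x < MAP_WIDTH && y < MAP_HEIGHT;
}

/* Hypothetical helper: read a tile, treating out-of-range as 0 (empty). */
int getTile(const Stage *s, int x, int y)
{
    return isInsideMap(x, y) ? s->map[x][y] : 0;
}

/* Hypothetical helper: zero the whole map. sizeof(s->map) is the
 * same number of bytes as sizeof(int) * MAP_WIDTH * MAP_HEIGHT. */
void clearStage(Stage *s)
{
    memset(s->map, 0, sizeof(s->map));
}
```

The first index runs up to MAP_WIDTH and the second up to MAP_HEIGHT, so getTile(&stage, 39, 19) is the bottom-right tile, while getTile(&stage, 19, 39) is out of range and returns 0.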

With the defs and structs set up, we can now turn our attention to the most important file: map.c. There are four functions in this file that we need to consider: initMap, drawMap, loadTiles, and loadMap. We'll start with initMap:

    void initMap(void)
    {
        memset(&stage.map, 0, sizeof(int) * MAP_WIDTH * MAP_HEIGHT);

        loadTiles();

        loadMap("data/map01.dat");
    }

As we can see, initMap isn't too complicated. It clears the map data using a memset, and then calls the loadTiles and loadMap functions. We'll look at both of these next, starting with loadTiles:

    static void loadTiles(void)
    {
        int i;
        char filename[MAX_FILENAME_LENGTH];

        for (i = 1 ; i < MAX_TILES ; i++)
        {
            sprintf(filename, "gfx/tile%d.png", i);

            tiles[i] = loadTexture(filename);
        }
    }

The loadTiles function runs a for loop from 1 up to, but not including, MAX_TILES (defined earlier as 8), so it loads tiles 1 through 7. Index 0 is never loaded; as we'll see when drawing, 0 is treated as an empty space. For each value of the loop, we load a tile file from the gfx directory. The template we'll use is gfx/tile%d.png, which works out as gfx/tile1.png, gfx/tile2.png, etc.
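The filename step can be tried in isolation. The sketch below is a variation rather than the tutorial's code: it uses snprintf instead of sprintf so the buffer size is respected, and tileFilename is a hypothetical helper. The value given to MAX_FILENAME_LENGTH here is an assumption, since its real definition isn't shown in this part.

```c
#include <stdio.h>

#define MAX_TILES           8
#define MAX_FILENAME_LENGTH 256 /* assumed value, for illustration only */

/* Hypothetical helper: writes "gfx/tile<i>.png" into out.
 * Returns 1 on success, or 0 if i is outside 1..MAX_TILES-1
 * or the name does not fit into the buffer. */
int tileFilename(char *out, size_t size, int i)
{
    int n;

    if (i < 1 || i >= MAX_TILES)
    {
        return 0;
    }

    n = snprintf(out, size, "gfx/tile%d.png", i);

    /* snprintf returns the length it wanted to write. */
    return n > 0 && (size_t) n < size;
}
```

Calling tileFilename(buf, sizeof(buf), 1) fills buf with gfx/tile1.png, while an index of 0 or 8 is rejected, matching the range the loop covers.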

Next, we'll look at our loadMap function. This function accepts one argument - the filename to use:

    static void loadMap(const char *filename)
    {
        char *data, *p;
        int x, y;

        data = readFile(filename);

        p = data;

        for (y = 0 ; y < MAP_HEIGHT ; y++)
        {
            for (x = 0 ; x < MAP_WIDTH ; x++)
            {
                sscanf(p, "%d", &stage.map[x][y]);

                do {p++;} while (*p != ' ' && *p != '\n');
            }
        }

        free(data);
    }

To load the map data, we call a function called readFile, passing over our filename. This function returns a char array containing the contents of the file, which we point to using a variable called data. We create a second pointer into the data, called p, then loop through the map's rows (MAP_HEIGHT) and columns (MAP_WIDTH). We call sscanf to read the next number, storing it in stage.map[x][y]. We then advance p until it reaches a space or a new line, which marks the end of the number we just read. Our map data is space separated as well as new line separated, which is why we use these as the delimiters. Note that this means every number, including the last one, needs to be followed by a space or a new line. We step through the data with a pointer so that each sscanf call starts at the next number; this keeps reading fast, especially for very big maps. Finally, we free our map data to prevent memory leaks.
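The same parsing idea can be sketched without a file, which makes it easier to experiment with. The version below is an alternative, not the tutorial's method: it swaps sscanf and the manual delimiter skip for strtol, which skips leading whitespace and reports where the number ended. parseMap is a hypothetical name, and it stops early if the data runs out instead of reading past the end of the buffer.

```c
#include <stdlib.h>

#define MAP_WIDTH  40
#define MAP_HEIGHT 20

/* Hypothetical helper: parse whitespace-separated integers from text
 * into map, row by row. Returns the number of tiles actually parsed. */
int parseMap(const char *text, int map[MAP_WIDTH][MAP_HEIGHT])
{
    const char *p = text;
    char *end;
    int x, y, count = 0;

    for (y = 0; y < MAP_HEIGHT; y++)
    {
        for (x = 0; x < MAP_WIDTH; x++)
        {
            /* strtol skips spaces and new lines before the digits. */
            long n = strtol(p, &end, 10);

            if (end == p)
            {
                /* Nothing was converted: the data ran out early. */
                return count;
            }

            map[x][y] = (int) n;
            p = end;
            count++;
        }
    }

    return count;
}
```

A complete map should yield MAP_WIDTH * MAP_HEIGHT (800) tiles, so comparing the return value against that number is a simple way to detect a truncated or malformed file.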

Drawing our map is very straightforward, as we can see in the drawMap function:

    void drawMap(void)
    {
        int x, y, n;

        for (y = 0 ; y < MAP_RENDER_HEIGHT ; y++)
        {
            for (x = 0 ; x < MAP_RENDER_WIDTH ; x++)
            {
                n = stage.map[x][y];

                if (n > 0)
                {
                    blit(tiles[n], x * TILE_SIZE, y * TILE_SIZE, 0);
                }
            }
        }
    }

Drawing the map is simply a case of looping through the rows and columns of our map data and blitting the relevant tiles. We limit our ranges to MAP_RENDER_HEIGHT and MAP_RENDER_WIDTH, so that we don't draw the entire map all the time. When it comes to selecting the tile to use, the number held in our array will represent the tile texture. We test to see if the value of the tile is greater than 0 (0 being considered an empty space) and blit it, multiplying our x and y values by TILE_SIZE to position them in the correct place.
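To see which part of the map this loop actually covers, the same traversal can be run without SDL. The sketch below is a stand-in for illustration only: renderMapAsText is a hypothetical function that writes one character per visible tile into a text buffer instead of blitting textures.

```c
#define MAX_TILES         8
#define MAP_WIDTH         40
#define MAP_HEIGHT        20
#define MAP_RENDER_WIDTH  20
#define MAP_RENDER_HEIGHT 12

/* One character per tile, a new line per row, and a terminator. */
#define TEXT_BUFFER_SIZE (MAP_RENDER_HEIGHT * (MAP_RENDER_WIDTH + 1) + 1)

/* Hypothetical stand-in for drawMap: '.' marks an empty space,
 * a digit marks a tile. out must hold TEXT_BUFFER_SIZE chars. */
void renderMapAsText(int map[MAP_WIDTH][MAP_HEIGHT], char *out)
{
    int x, y, n;

    for (y = 0; y < MAP_RENDER_HEIGHT; y++)
    {
        for (x = 0; x < MAP_RENDER_WIDTH; x++)
        {
            n = map[x][y];

            /* Same test as drawMap: only values above 0 are tiles. */
            *out++ = (n > 0 && n < MAX_TILES) ? (char) ('0' + n) : '.';
        }

        *out++ = '\n';
    }

    *out = '\0';
}
```

Only columns 0 to 19 and rows 0 to 11 are ever visited, so a tile placed at map[20][0] never reaches the output. This is the limitation that scrolling is meant to address.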

Bringing everything together, we want to initialize our map at the same time as our stage, in the initStage function in stage.c:

    void initStage(void)
    {
        app.delegate.logic = logic;
        app.delegate.draw = draw;

        initMap();
    }

We'll call drawMap from the draw function in stage.c, first filling the screen with a light blue to simulate a nice day:

    static void draw(void)
    {
        SDL_SetRenderDrawColor(app.renderer, 128, 192, 255, 255);
        SDL_RenderFillRect(app.renderer, NULL);

        drawMap();
    }

Before we finish up, let's look at the readFile function:

    char *readFile(const char *filename)
    {
        char *buffer = 0;
        unsigned long length;
        FILE *file = fopen(filename, "rb");

        if (file)
        {
            fseek(file, 0, SEEK_END);
            length = ftell(file);
            fseek(file, 0, SEEK_SET);

            buffer = malloc(length + 1);
            memset(buffer, 0, length + 1);
            fread(buffer, 1, length, file);

            fclose(file);

            buffer[length] = '\0';
        }

        return buffer;
    }

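The file is opened and its length determined by seeking to the end with fseek (passing in SEEK_END) and then calling ftell. We then seek back to the start of the file. Knowing the length, we malloc a char array of length + 1, to allow room for the null terminator at the end. The entire file is read with fread, the file is closed, and the buffer returned. If the file can't be opened, the function returns a null pointer.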
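For comparison, here is a sketch of a more defensive variant that checks each step. It is not the tutorial's code: readFileChecked is a hypothetical name, and it returns NULL on any failure so the caller can test the result before parsing.

```c
#include <stdio.h>
#include <stdlib.h>

/* Hypothetical variant of readFile: returns a null-terminated copy of
 * the file's contents, or NULL if anything goes wrong. The caller
 * must free the returned buffer. */
char *readFileChecked(const char *filename)
{
    char *buffer = NULL;
    long length;
    FILE *file = fopen(filename, "rb");

    if (file == NULL)
    {
        return NULL;
    }

    if (fseek(file, 0, SEEK_END) == 0 &&
        (length = ftell(file)) >= 0 &&
        fseek(file, 0, SEEK_SET) == 0)
    {
        buffer = malloc((size_t) length + 1);

        if (buffer != NULL)
        {
            /* fread may return fewer bytes than requested, so
             * terminate the string at what was actually read. */
            size_t got = fread(buffer, 1, (size_t) length, file);

            buffer[got] = '\0';
        }
    }

    fclose(file);

    return buffer;
}
```

A caller would check the result before using it, for example returning early from the map loading code when readFileChecked gives back NULL.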

As can be seen, loading and displaying our map data is rather easy. There is a problem, however: our map is static and can't be scrolled around. In the next tutorial, we'll look at scrolling the map so that the entire thing can be shown.

Purchase

The source code for all parts of this tutorial (including assets) is available for purchase:

From itch.io

It is also available as part of the SDL2 tutorial bundle: